Synthehicle: Multi-Target Multi-Camera Tracking in Virtual Cities

Fabian Herzog*, Junpeng Chen, Torben Teepe, Johannes Gilg, Stefan Hörmann, Gerhard Rigoll

Technical University of Munich, Germany

Abstract

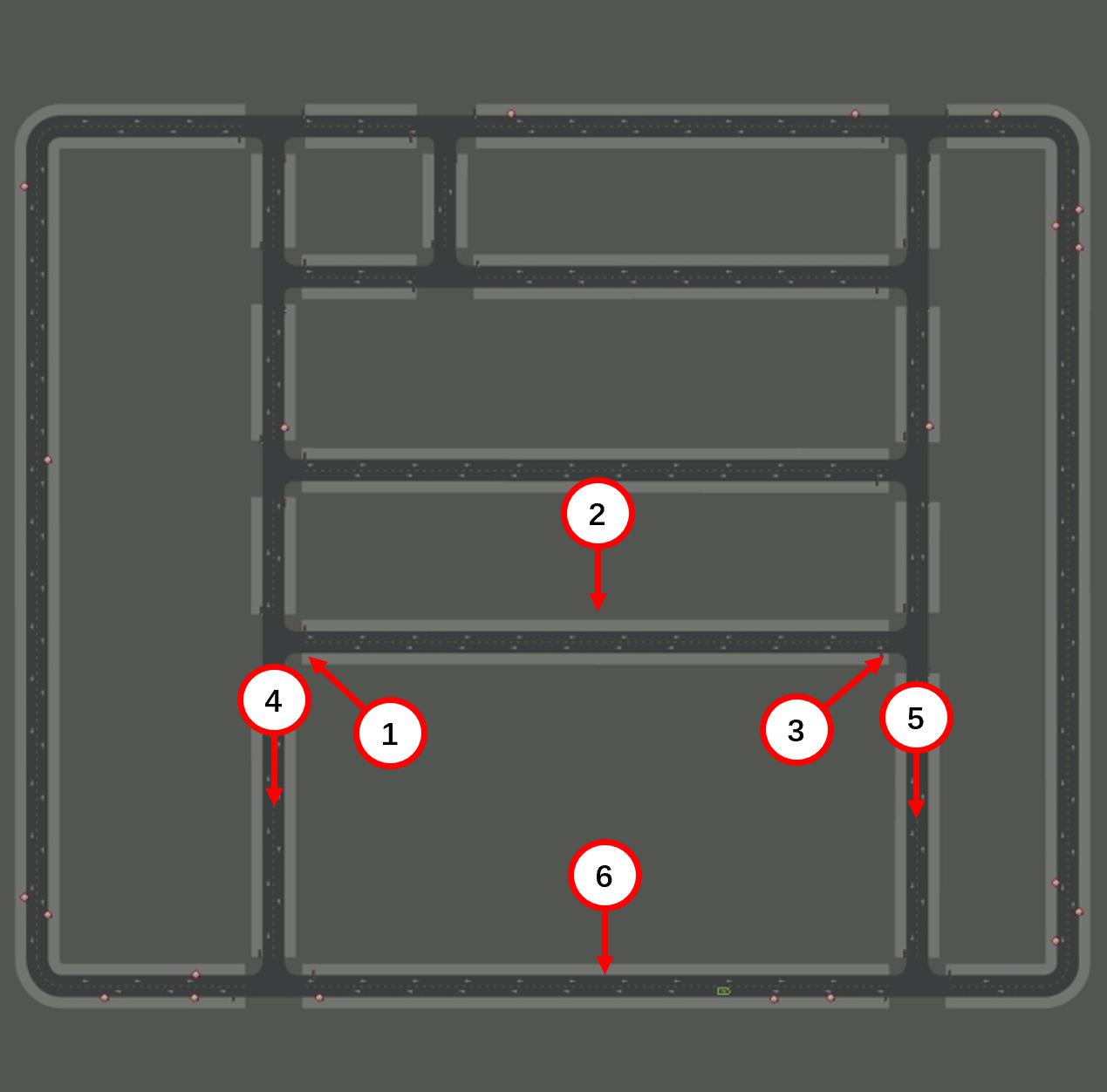

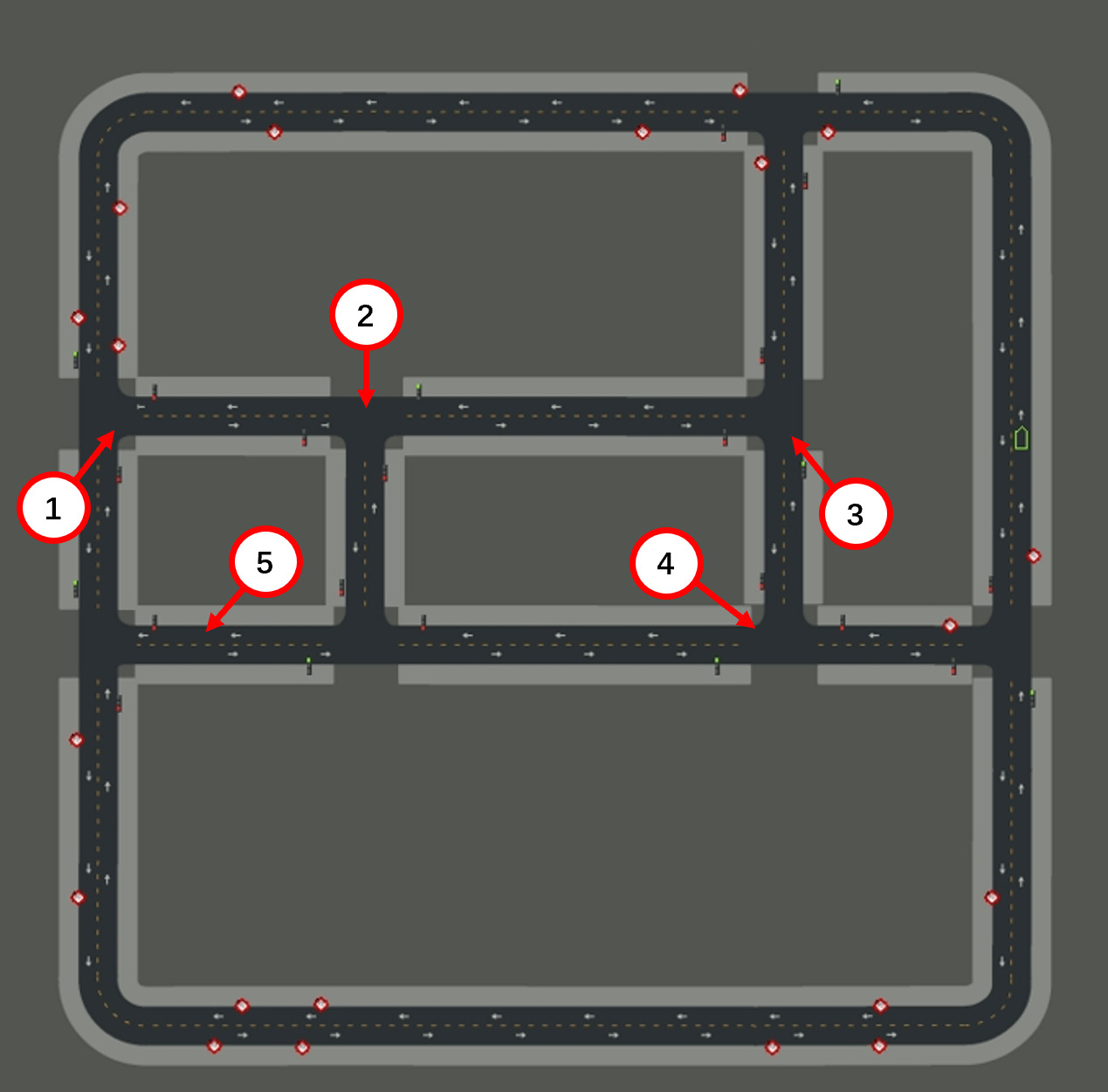

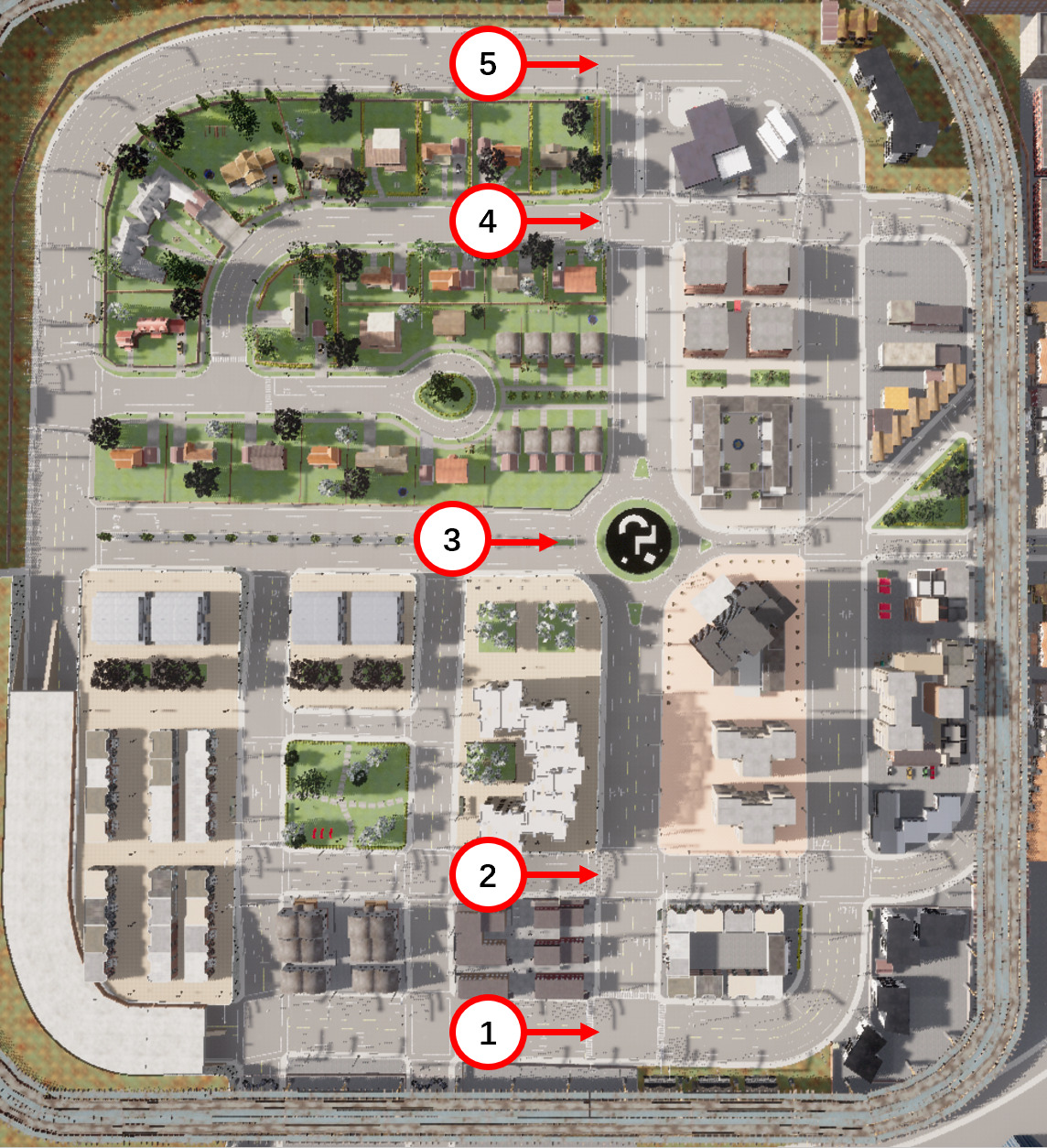

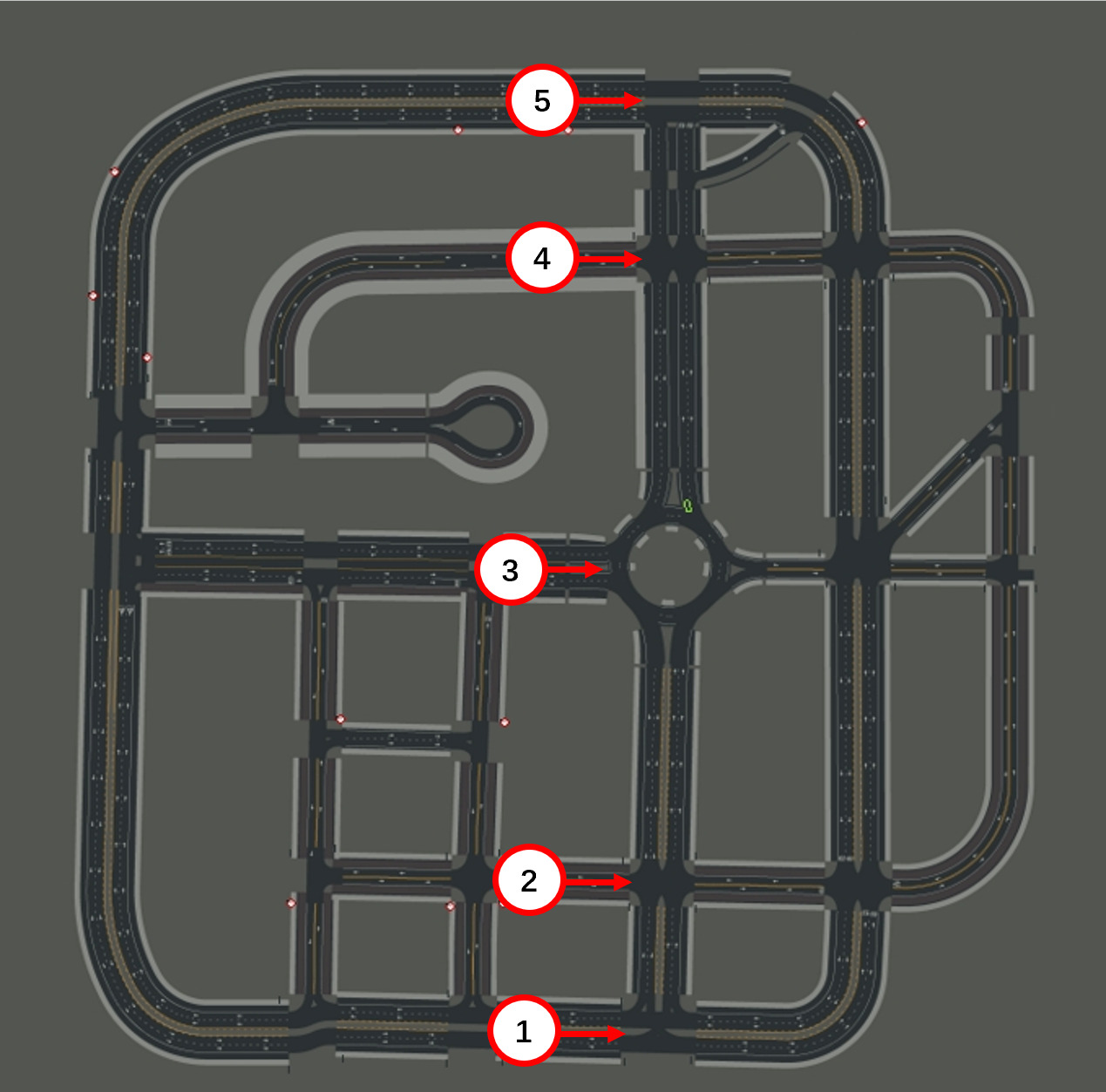

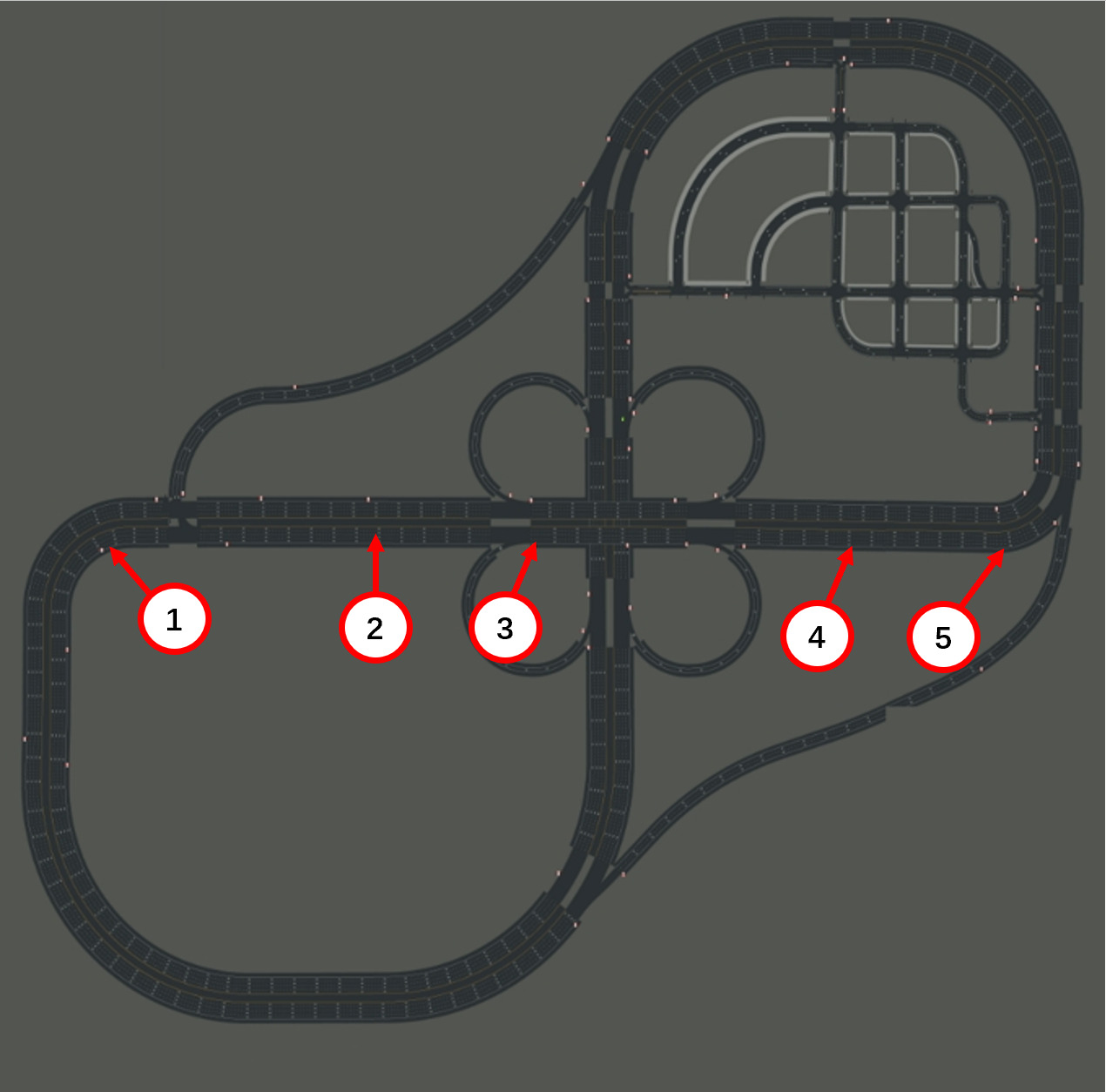

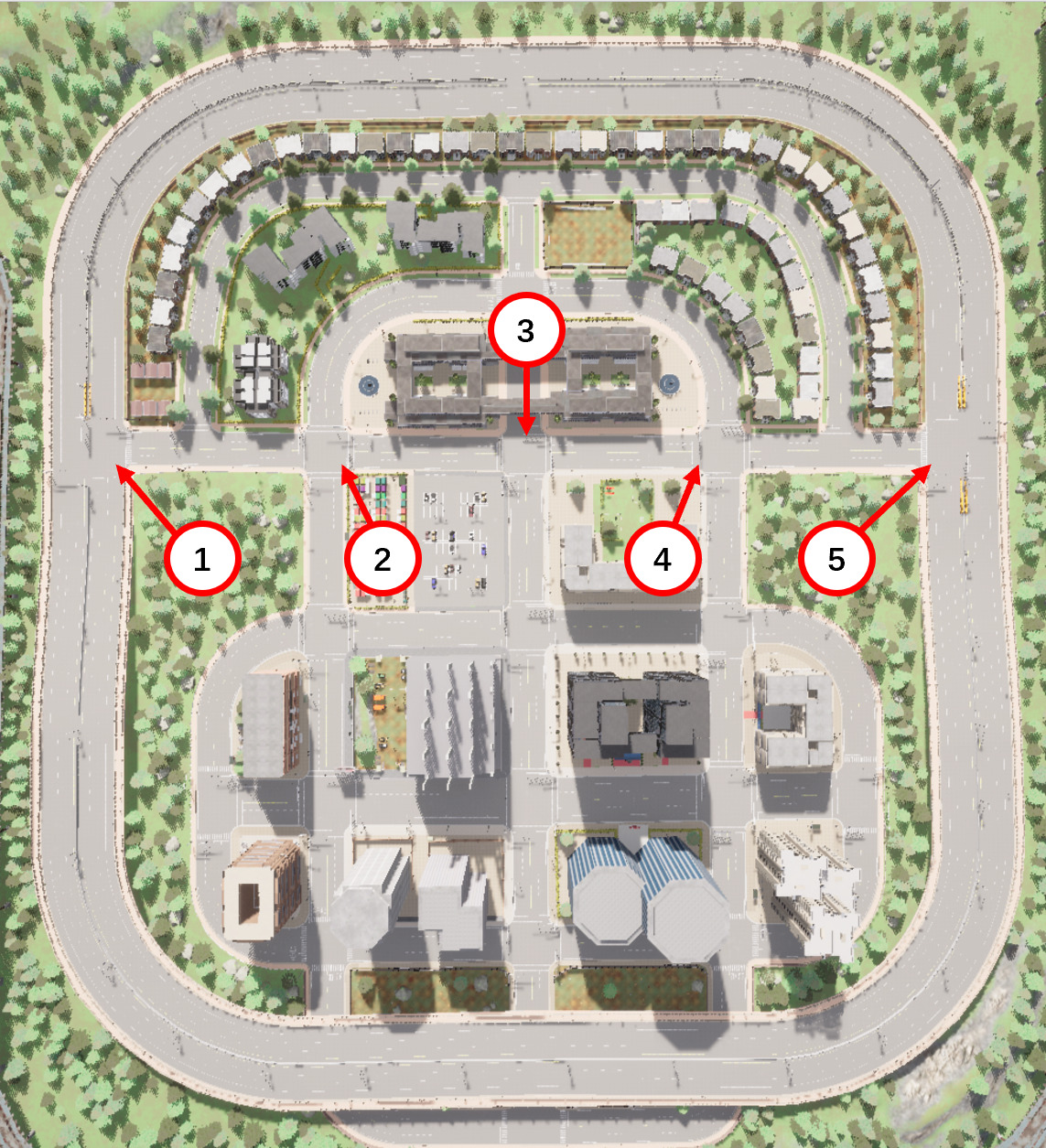

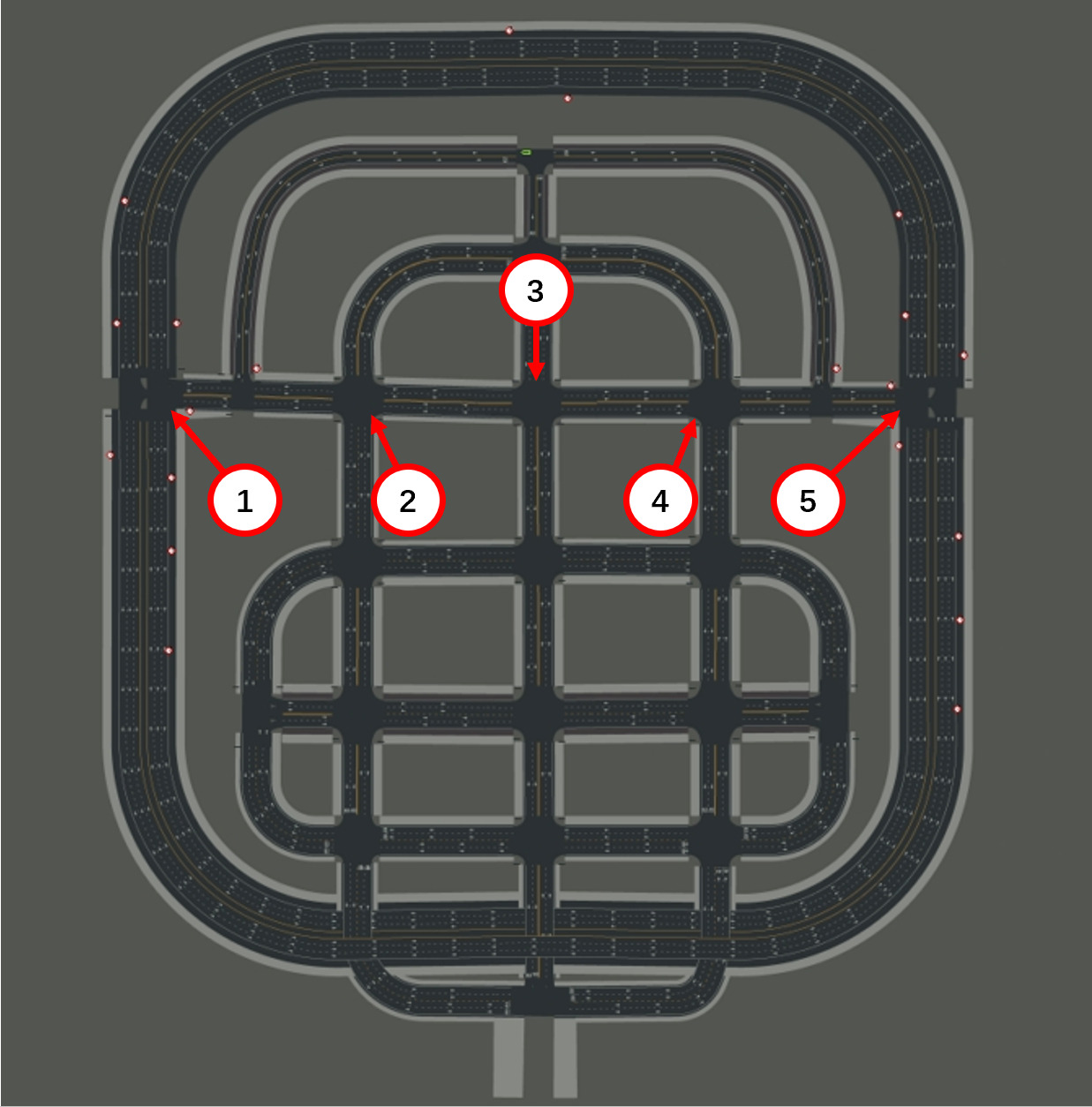

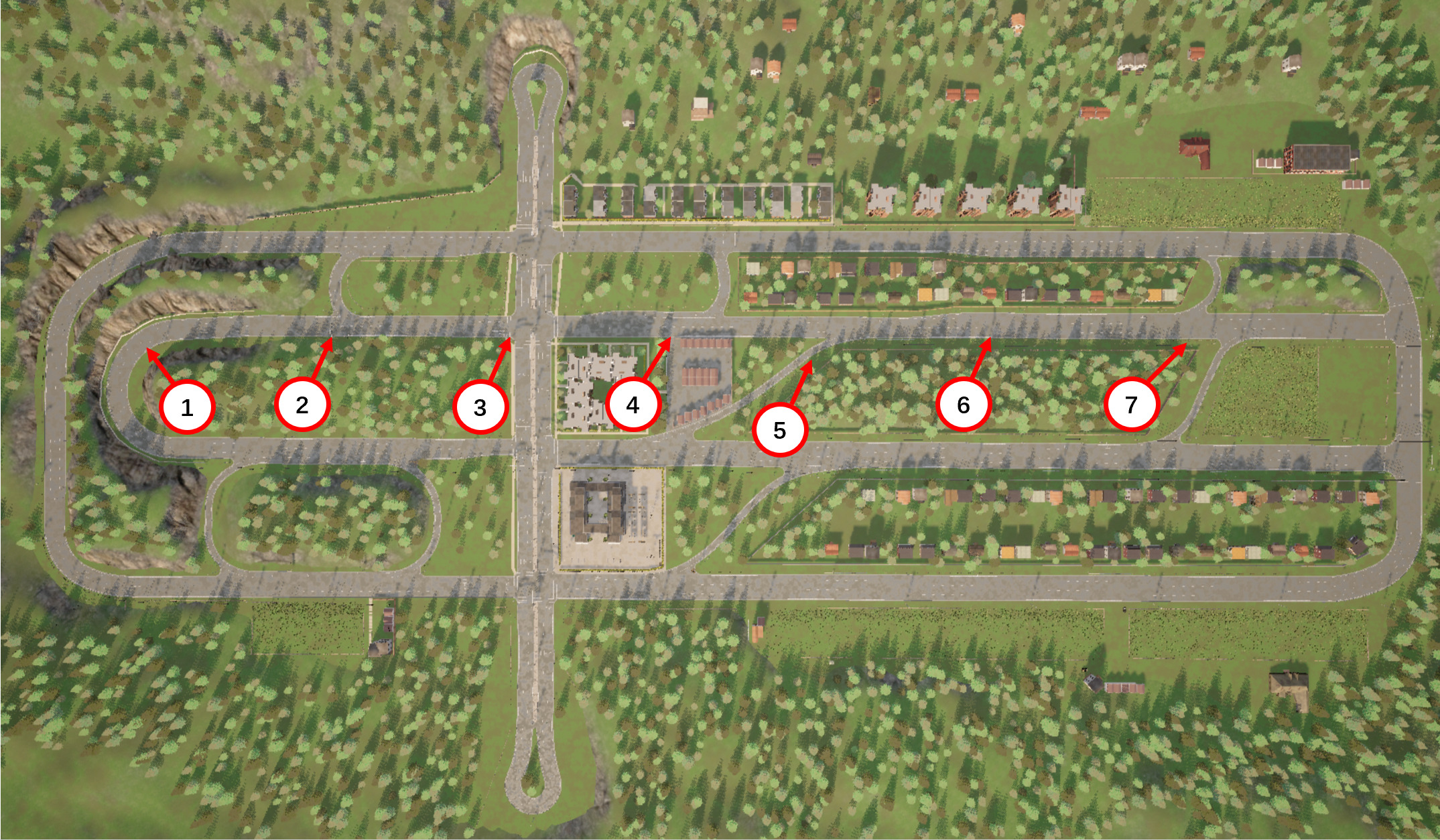

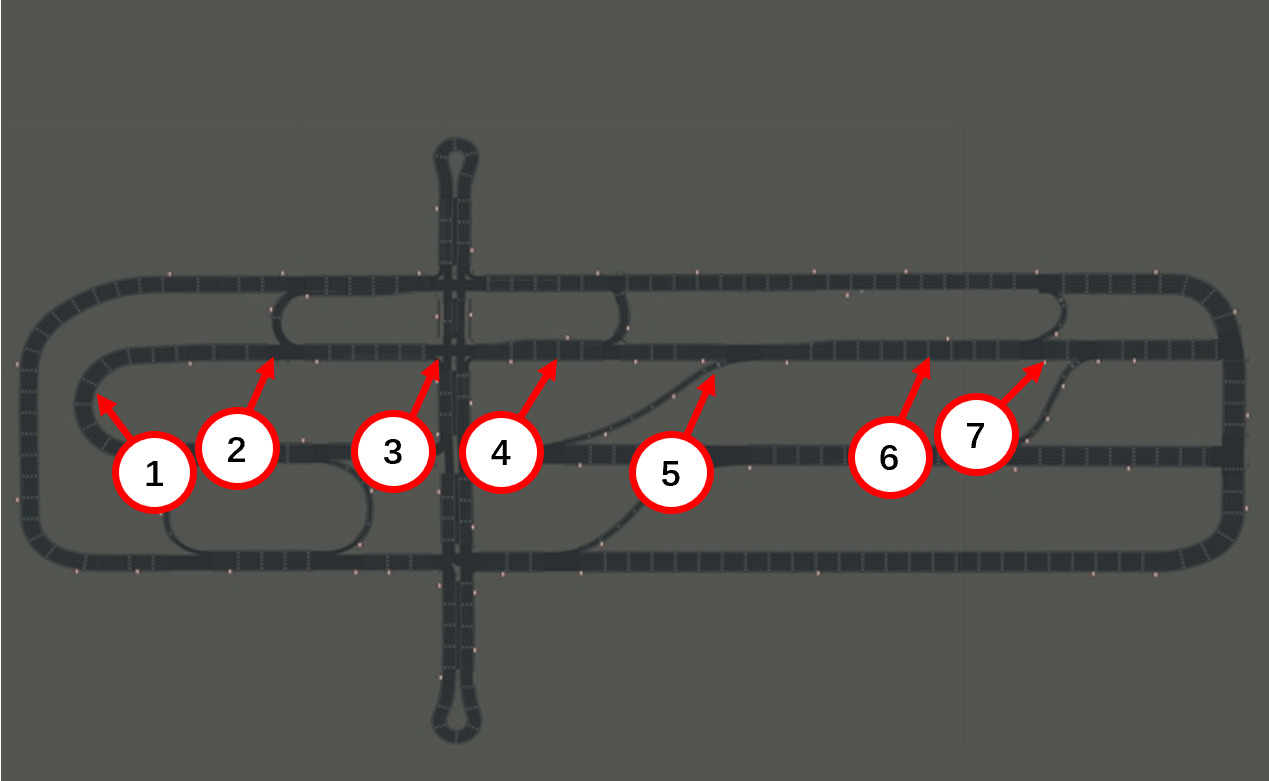

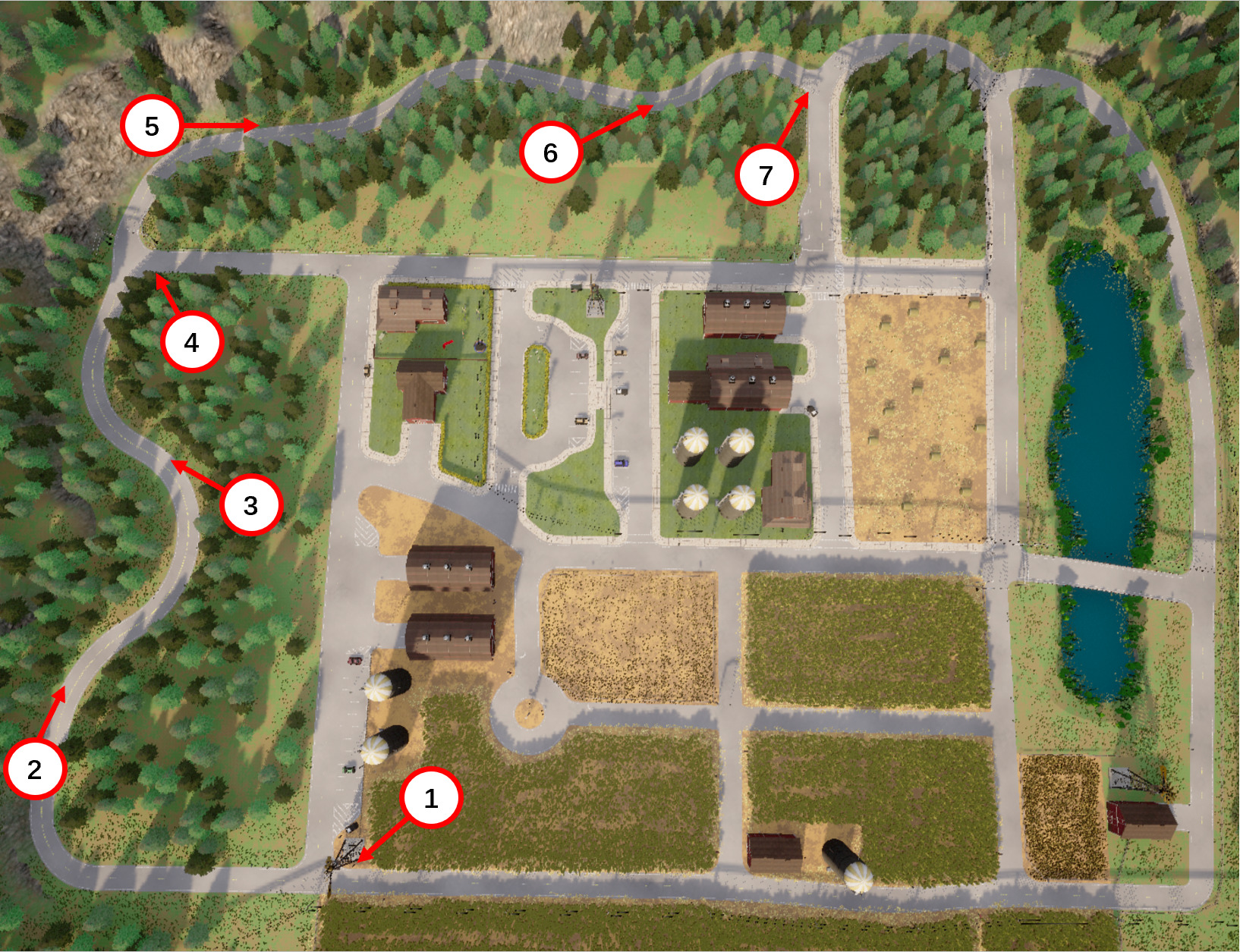

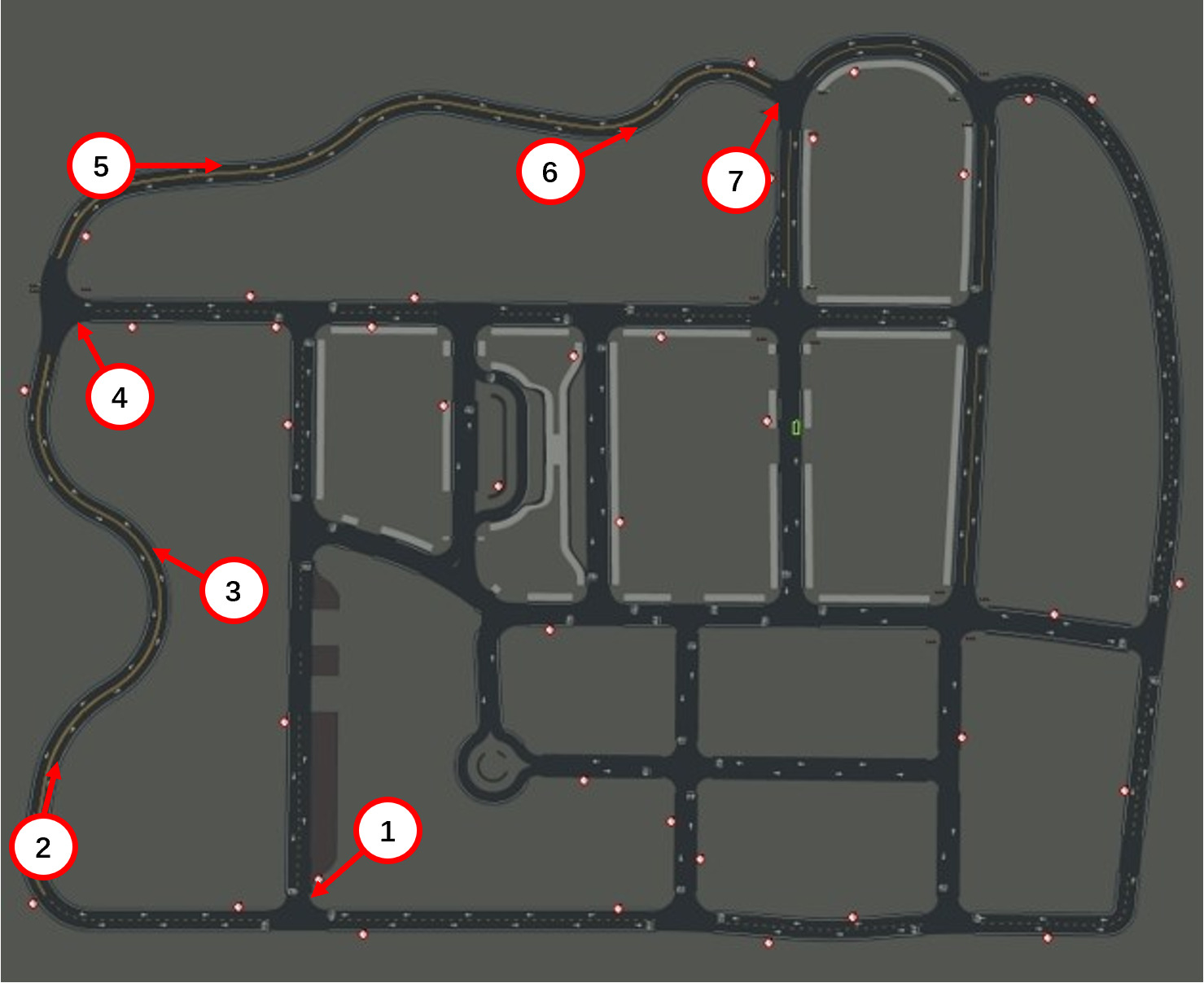

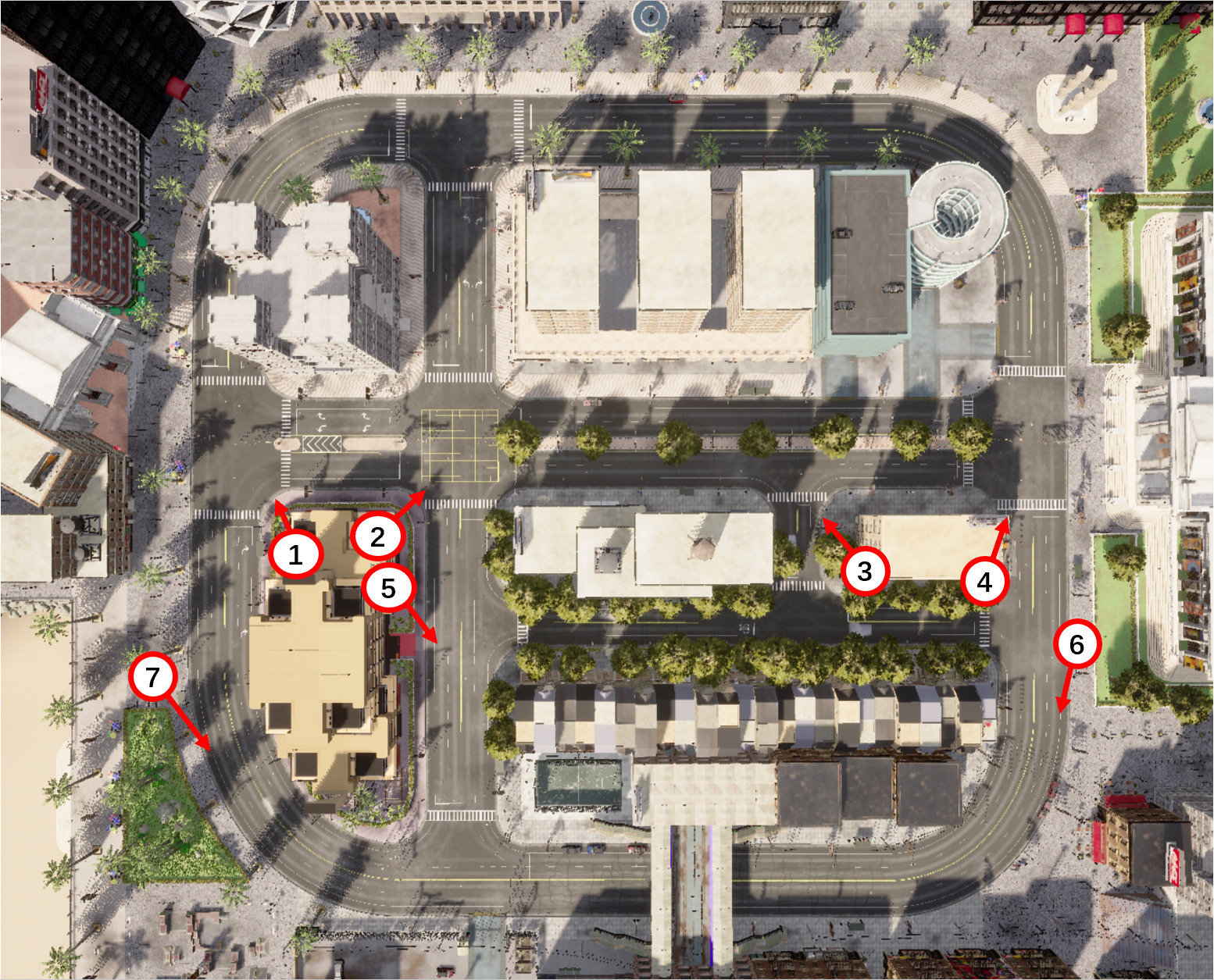

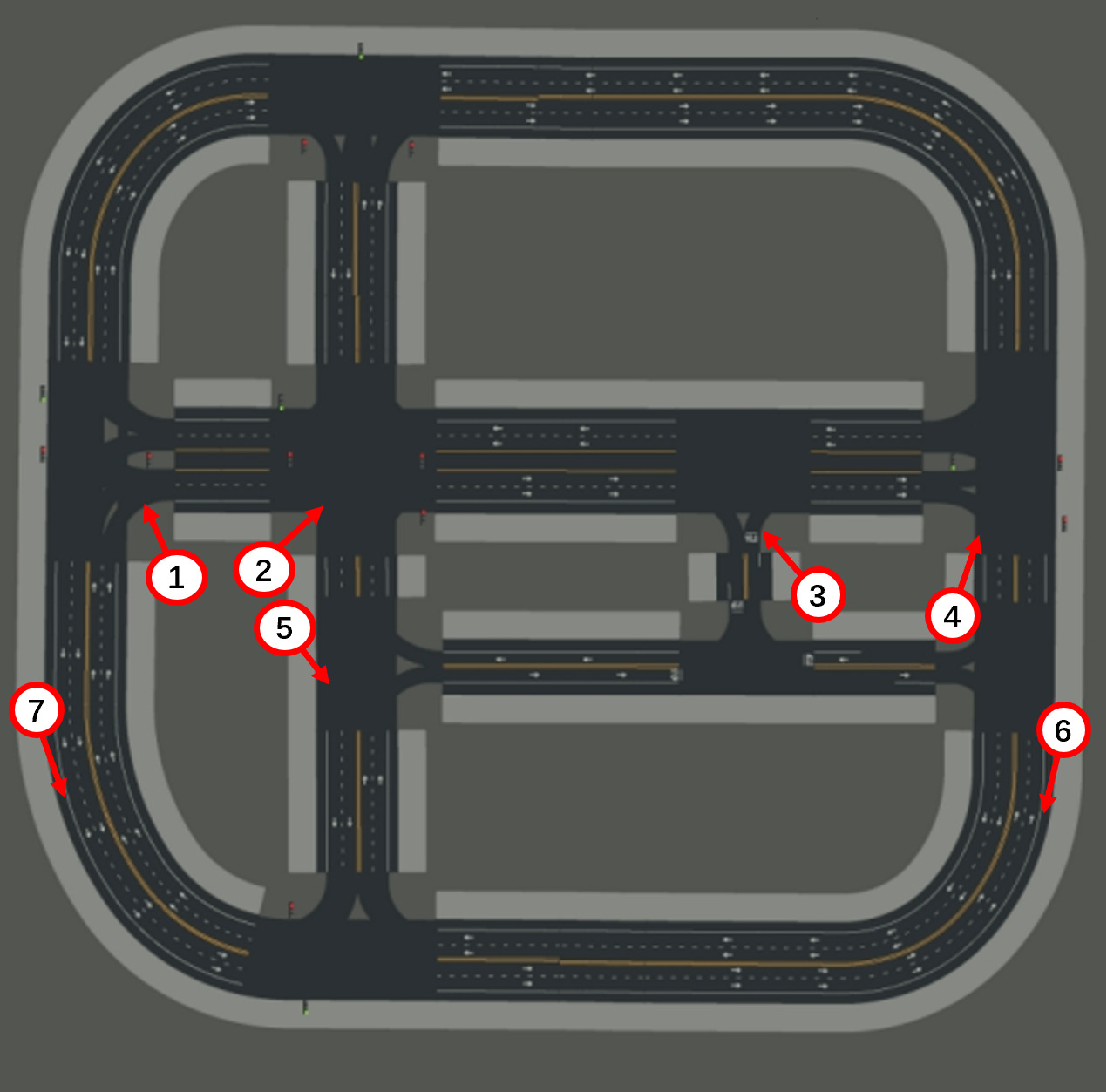

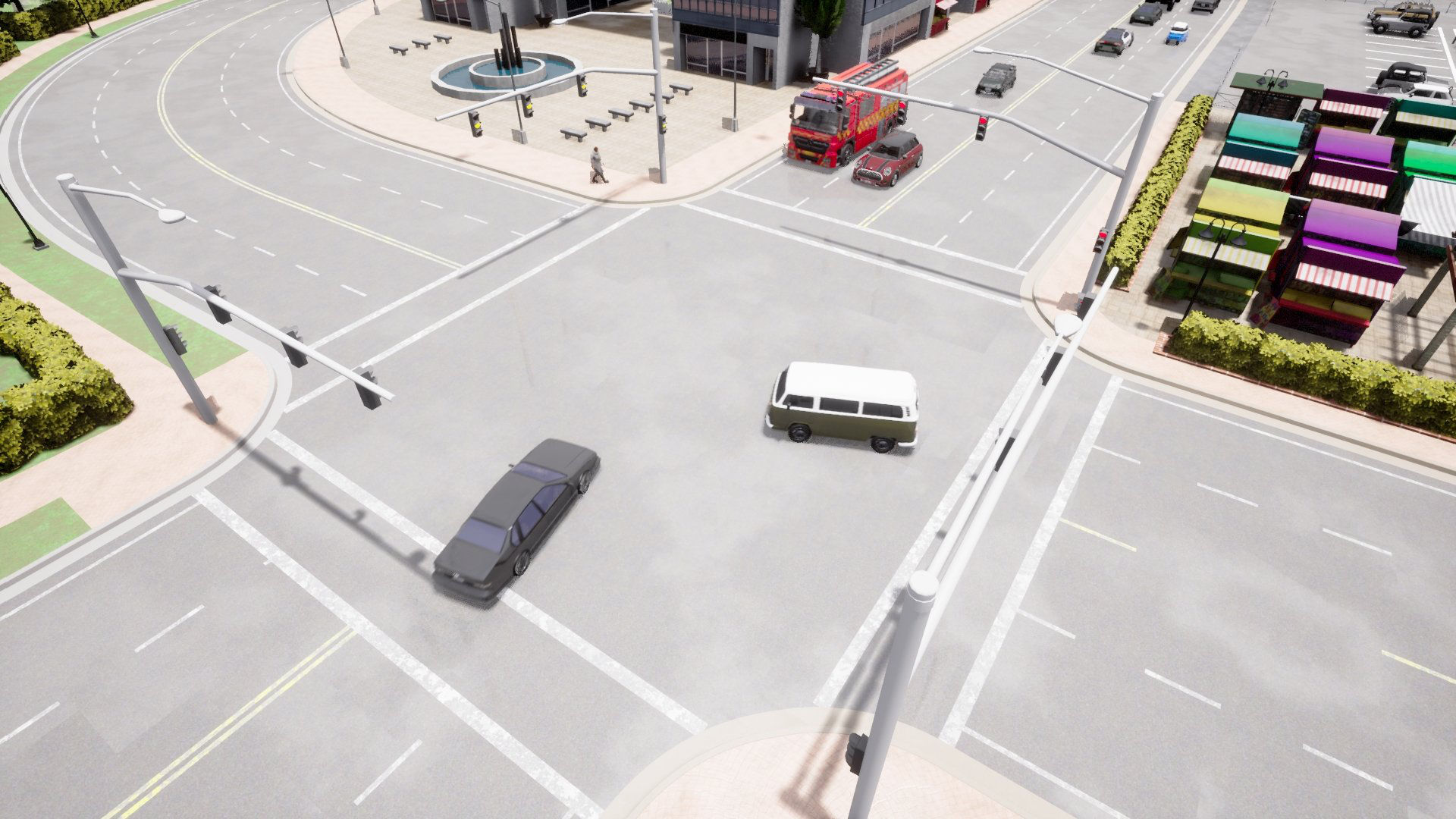

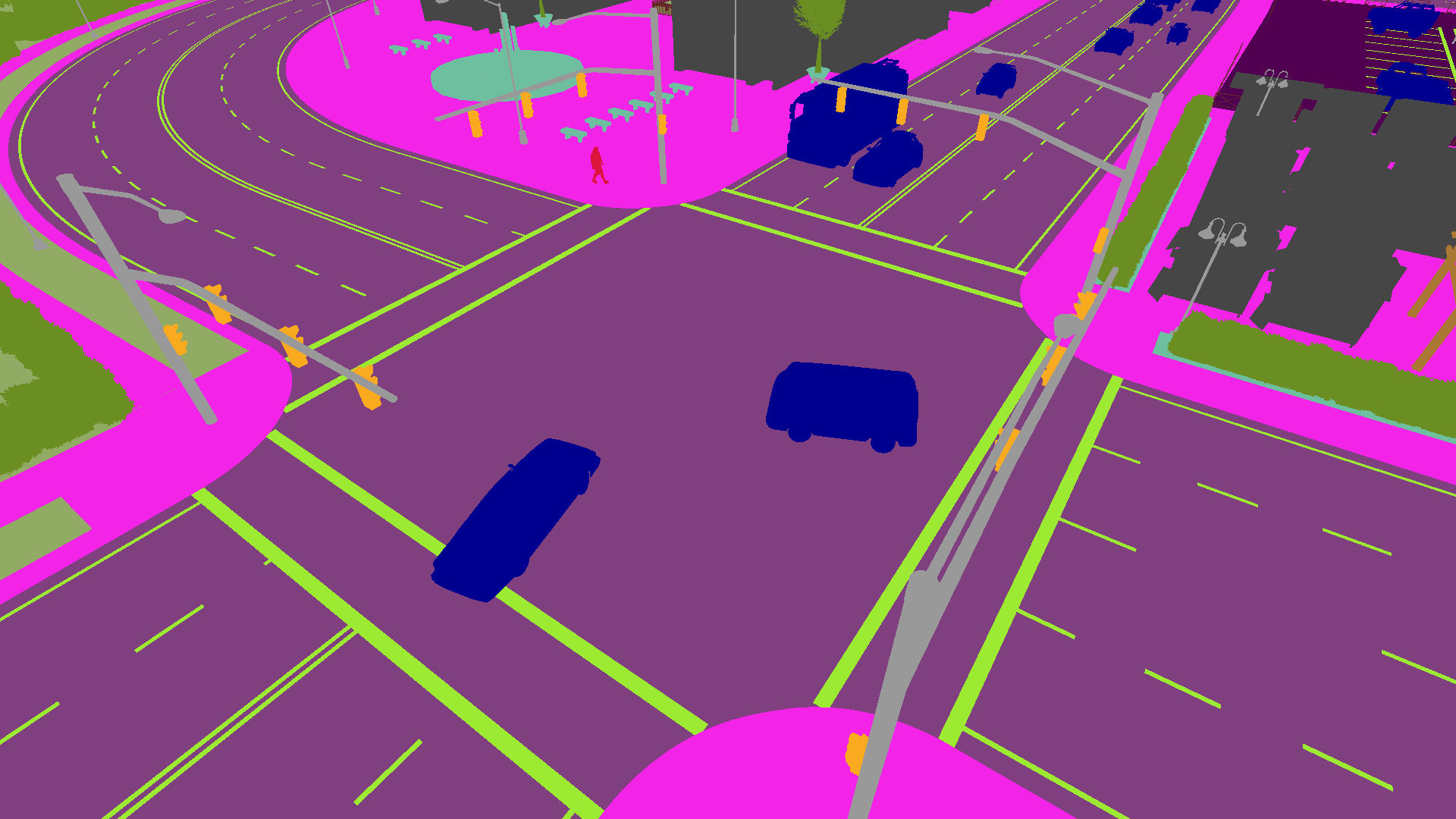

Smart City applications such as intelligent traffic routing or accident prevention rely on computer vision methods for precise vehicle localization and tracking. Because accurately labeled data are scarce, detecting and tracking vehicles in 3D from multiple cameras remains difficult to study. We present a large-scale synthetic dataset for multi-vehicle tracking and segmentation across multiple overlapping and non-overlapping camera views. Unlike existing datasets, which only provide tracking ground truth for 2D bounding boxes, our dataset additionally contains perfect labels for 3D bounding boxes in camera and world coordinates, for depth estimation, and for instance, semantic, and panoptic segmentation. The dataset consists of 17 hours of labeled video recorded from 340 cameras in 64 diverse day, rain, dawn, and night scenes, making it the most extensive dataset for multi-target multi-camera tracking so far. We provide baselines for detection, vehicle re-identification, and single- and multi-camera tracking. Code and data are publicly available.
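3D boxes given in world coordinates relate to camera coordinates through the camera extrinsics, and a tight 2D box can then be derived by projecting the box corners with the intrinsics. The following is a minimal sketch of that chain, not the dataset's documented format or tooling. It assumes a pinhole camera with intrinsic matrix `K`, extrinsics `(R, t)` mapping world to camera coordinates as `X_cam = R @ X_world + t`, a z-up world frame, and a box parameterized by center, size, and yaw. All function names are hypothetical.

```python
import numpy as np

def box_corners(center, size, yaw):
    """Return the 8 corners (8x3) of a 3D box from its center (x, y, z),
    size (length, width, height) and yaw about the vertical z-axis
    (assumes a z-up world frame; hypothetical parameterization)."""
    l, w, h = size
    # Corner offsets in the box's local frame, centered at the origin.
    x = np.array([l, l, -l, -l, l, l, -l, -l]) / 2
    y = np.array([w, -w, -w, w, w, -w, -w, w]) / 2
    z = np.array([h, h, h, h, -h, -h, -h, -h]) / 2
    c, s = np.cos(yaw), np.sin(yaw)
    rot = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    # Rotate the offsets by yaw, then translate them to the box center.
    return (rot @ np.vstack([x, y, z])).T + np.asarray(center, dtype=float)

def world_to_camera(points_world, R, t):
    """Map Nx3 world points into camera coordinates: X_cam = R @ X_world + t."""
    return points_world @ R.T + t

def project_to_image(points_cam, K):
    """Pinhole projection of Nx3 camera points (z > 0) to Nx2 pixel coordinates."""
    uvw = points_cam @ K.T
    return uvw[:, :2] / uvw[:, 2:3]

def image_box_from_3d(center, size, yaw, R, t, K):
    """Axis-aligned 2D box (x_min, y_min, x_max, y_max) enclosing the
    projected corners of a world-coordinate 3D box."""
    corners_cam = world_to_camera(box_corners(center, size, yaw), R, t)
    if np.any(corners_cam[:, 2] <= 0):
        raise ValueError("box is (partly) behind the camera")
    uv = project_to_image(corners_cam, K)
    return (*uv.min(axis=0), *uv.max(axis=0))
```

Enclosing all eight projected corners yields a box that covers the full vehicle even where it is occluded. A box fitted to visible pixels, which a segmentation mask would give, can be smaller.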

Highlights

- Diverse weather and lighting

- 2D and 3D annotations

- Instance, semantic, and panoptic segmentations

- 340 videos

- 64 scenes

- 17 hours

- 4,623,184 annotated bounding boxes
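Panoptic labels combine the semantic and instance labels. Every pixel receives a class, and pixels of countable "thing" classes such as vehicles also receive an instance identity. The sketch below shows one common way to store both in a single integer map: `panoptic_id = class_id * divisor + instance_id`. It is an assumption, not Synthehicle's documented encoding. The divisor of 1000, the class ids, and the function names are hypothetical.

```python
import numpy as np

LABEL_DIVISOR = 1000  # hypothetical; must exceed the largest instance id

def to_panoptic(semantic, instance, thing_classes, divisor=LABEL_DIVISOR):
    """Fuse per-pixel semantic and instance maps into one panoptic id map.

    Pixels of "thing" classes get class_id * divisor + instance_id;
    all other ("stuff") pixels get class_id * divisor, i.e. instance id 0.
    """
    semantic = np.asarray(semantic, dtype=np.int64)
    instance = np.asarray(instance, dtype=np.int64)
    if instance.max(initial=0) >= divisor:
        raise ValueError("instance ids must be smaller than the label divisor")
    is_thing = np.isin(semantic, list(thing_classes))
    # Drop instance ids on stuff pixels so they cannot leak into the encoding.
    return semantic * divisor + np.where(is_thing, instance, 0)

def from_panoptic(panoptic, divisor=LABEL_DIVISOR):
    """Split a panoptic id map back into (semantic, instance) maps."""
    panoptic = np.asarray(panoptic, dtype=np.int64)
    return panoptic // divisor, panoptic % divisor
```

With this encoding, a single integer image is enough to recover both the semantic and the instance map, and the round trip is lossless as long as instance ids stay below the divisor.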

Ground Truth (figure panels: RGB + Bounding Boxes; Semantic Segmentation)